AWS Security Blog

Use AWS Network Firewall to filter outbound HTTPS traffic from applications hosted on Amazon EKS and collect hostnames provided by SNI

October 13, 2022: This post has been updated with a modified diagram for Figure 1: Outbound internet access through Network Firewall from Amazon EKS worker nodes.

This blog post shows how to set up an Amazon Elastic Kubernetes Service (Amazon EKS) cluster such that the applications hosted on the cluster can have their outbound internet access restricted to a set of hostnames provided by the Server Name Indication (SNI) in the allow list in the AWS Network Firewall rules. For encrypted web traffic, SNI can be used for blocking access to specific sites in the network firewall. SNI is an extension to TLS that remains unencrypted in the traffic flow and indicates the destination hostname a client is attempting to access over HTTPS.
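To illustrate why a firewall can read the SNI without decrypting traffic, here is a minimal Python sketch that builds a TLS ClientHello in memory (no network connection is made) and checks whether the requested hostname appears in it as plain bytes. The function name `client_hello_bytes` and the hostname `example.com` are illustrative and not part of this post's sample files; the sketch assumes a standard TLS client that does not use Encrypted Client Hello.

```python
import ssl


def client_hello_bytes(hostname: str) -> bytes:
    """Return the first TLS handshake bytes a client would send for hostname."""
    context = ssl.create_default_context()
    incoming = ssl.MemoryBIO()  # bytes that would arrive from the server (none here)
    outgoing = ssl.MemoryBIO()  # bytes the client wants to put on the wire
    tls = context.wrap_bio(incoming, outgoing, server_hostname=hostname)
    try:
        tls.do_handshake()
    except ssl.SSLWantReadError:
        # Expected in this offline sketch: the client has written its
        # ClientHello and is now waiting for a server reply.
        pass
    return outgoing.read()


if __name__ == "__main__":
    hello = client_hello_bytes("example.com")
    # The SNI extension carries the hostname unencrypted.
    print(b"example.com" in hello)
```

A device on the network path sees the same bytes, which is what makes hostname-based filtering of HTTPS traffic possible.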

This post also shows you how to use Network Firewall to collect hostnames of the specific sites that are being accessed by your application. Securing outbound traffic to specific hostnames is called egress filtering. In computer networking, egress filtering is the practice of monitoring and potentially restricting the flow of information outbound from one network to another. Securing outbound traffic is usually done by means of a firewall that blocks packets that fail to meet certain security requirements. One such firewall is AWS Network Firewall, a managed service that you can use to deploy essential network protections for all of your VPCs that you create with Amazon Virtual Private Cloud (Amazon VPC).

Example scenario

You have the option to scan your application traffic by the identifier of the requested SSL certificate, which makes you independent from the relationship of the IP address to the certificate. The certificate could be served from any IP address. Traditional stateful packet filters are not able to follow the changing IP address of the endpoints. Therefore, the host name information that you get from the SNI becomes important in making security decisions. Amazon EKS has gained popularity for running containerized workloads in the AWS Cloud, and you can restrict outbound traffic to only the known hostnames provided by SNI. This post will walk you through the process of setting up the EKS cluster in two different subnets so that your software can use the additional traffic routing in the VPC and traffic filtering through Network Firewall.

Solution architecture

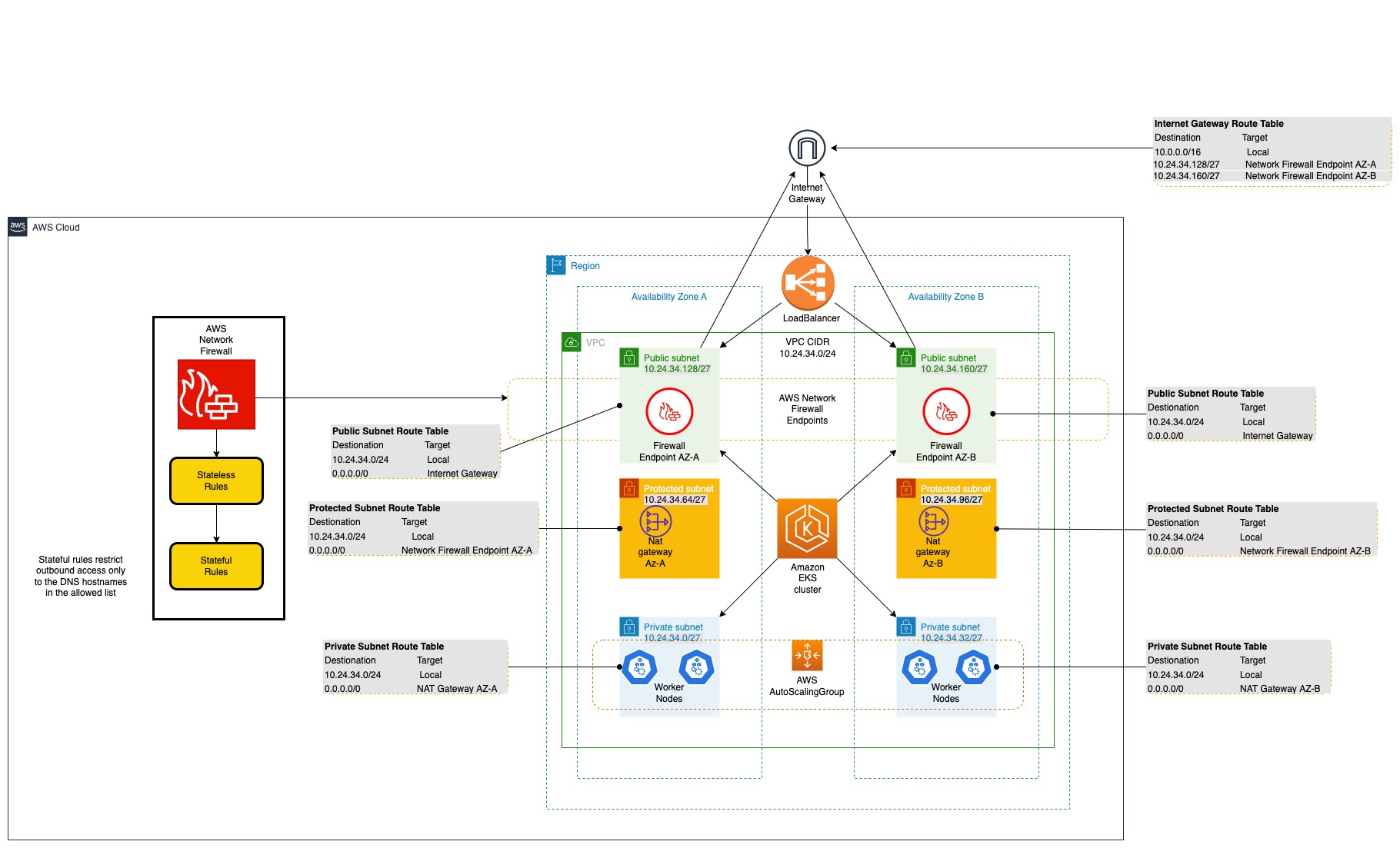

The architecture illustrated in Figure 1 shows a VPC with three subnets in Availability Zone A, and three subnets in Availability Zone B. There are two public subnets where Network Firewall endpoints are deployed, two private subnets where the worker nodes for the EKS cluster are deployed, and two protected subnets where NAT gateways are deployed.

Figure 1: Outbound internet access through Network Firewall from Amazon EKS worker nodes

The workflow in the architecture for outbound access to a third-party service is as follows:

- The outbound request originates from the application running in the private subnet (for example, to https://thinkwithwp.com) and is passed to the NAT gateway in the protected subnet.

- The HTTPS traffic received in the protected subnet is routed to the AWS Network Firewall endpoint in the public subnet.

- The network firewall evaluates its rules, and either allows or blocks the request before it can pass to the internet gateway.

- If the request is allowed, the hostname requested by the application (provided by SNI in the unencrypted part of the TLS handshake) is on the allow list in the network firewall, and the request successfully reaches the third-party server.
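The workflow above can be sketched as a chain of default-route next hops. This is only a toy model of the path in Figure 1, not a representation of real VPC route tables; the hop labels and the function name `trace_egress_path` are illustrative assumptions.

```python
# Default-route next hops following Figure 1; hop names are illustrative labels.
FIREWALL = "firewall-endpoint (public subnet)"
NEXT_HOP = {
    "worker-node (private subnet)": "nat-gateway (protected subnet)",
    "nat-gateway (protected subnet)": FIREWALL,
    FIREWALL: "internet-gateway",
}


def trace_egress_path(firewall_allows: bool) -> list:
    """Follow an outbound request hop by hop until it leaves the VPC or is blocked."""
    path = ["worker-node (private subnet)"]
    while path[-1] in NEXT_HOP:
        if path[-1] == FIREWALL and not firewall_allows:
            path.append("blocked")  # the firewall rules rejected the hostname
            break
        path.append(NEXT_HOP[path[-1]])
    return path


if __name__ == "__main__":
    print(" -> ".join(trace_egress_path(firewall_allows=True)))
    print(" -> ".join(trace_egress_path(firewall_allows=False)))
```

The point of the model is that every outbound request crosses the firewall endpoint after the NAT gateway and before the internet gateway, so the allow or block decision applies to all egress traffic from the worker nodes.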

The VPC settings for this blog post follow the recommendation for using public and private subnets described in Creating a VPC for your Amazon EKS cluster in the Amazon EKS User Guide, but with additional subnets called protected subnets. Instead of placing the NAT gateway in a public subnet, it will be placed in the protected subnet, and the Network Firewall endpoints in the public subnet will filter the egress traffic that flows through the NAT gateway. This design pattern adds further checks and could be a recommendation for your VPC setup.

As suggested in Creating a VPC for your Amazon EKS cluster, using the Public and private subnets option allows you to deploy your worker nodes to private subnets, and allows Kubernetes to deploy load balancers to the public subnets. This arrangement can load-balance traffic to pods that are running on nodes in the private subnets. As shown in Figure 1, the solution uses an additional subnet named the protected subnet, apart from the public and private subnets. The protected subnet is a VPC subnet deployed between the public subnet and private subnet. The outbound internet traffic that is routed through the protected subnet is rerouted to the Network Firewall endpoint hosted within the public subnet. You can use the same strategy mentioned in Creating a VPC for your Amazon EKS cluster to place different AWS resources within private subnets and public subnets. The main difference in this solution is that you place the NAT gateway in a separate protected subnet, between the private and public subnets, and place Network Firewall endpoints in the public subnets to filter traffic in the network firewall. The NAT gateway’s IP address is still preserved, and can still be added to the allow lists of third-party entities that need connectivity from the applications running on the EKS worker nodes.

To see a practical example of how the outbound traffic is filtered based on the hostnames provided by SNI, follow the steps in the Deploy a sample to test the network firewall section that follows. You will deploy an AWS CloudFormation template that deploys the solution architecture, consisting of the VPC components, EKS cluster components, and the Network Firewall components. When that’s complete, you can deploy a sample app running on Amazon EKS to test egress traffic filtering through AWS Network Firewall.

Deploy a sample to test the network firewall

Follow the steps in this section to perform a sample app deployment to test the use case of securing outbound traffic through AWS Network Firewall.

Prerequisites

The prerequisite actions required for the sample deployment are as follows:

- Make sure you have the AWS CLI installed, and configure access to your AWS account.

- Install and set up the eksctl tool to create an Amazon EKS cluster.

- Copy the necessary CloudFormation templates and the sample eksctl config files from the blog’s Amazon S3 bucket to your local file system. You can do this by using the following AWS CLI S3 cp commands.

aws s3 cp s3://aws-security-blog-content/public/sample/803-network-firewall-to-filter-outbound-traffic/config.yaml .
aws s3 cp s3://aws-security-blog-content/public/sample/803-network-firewall-to-filter-outbound-traffic/lambda_function.py .
aws s3 cp s3://aws-security-blog-content/public/sample/803-network-firewall-to-filter-outbound-traffic/network-firewall-eks-collect-all.yaml .
aws s3 cp s3://aws-security-blog-content/public/sample/803-network-firewall-to-filter-outbound-traffic/network-firewall-eks.yaml .

Important: These commands download the S3 bucket contents to the current directory on your terminal, so the “.” (dot) at the end of each command is very important.

- Once this is complete, you should be able to see the list of files shown in Figure 2. (The list includes config.yaml, lambda_function.py, network-firewall-eks-collect-all.yaml, and network-firewall-eks.yaml.)

Figure 2: Files downloaded from the S3 bucket

Deploy the VPC architecture with AWS Network Firewall

In this procedure, you’ll deploy the VPC architecture by using a CloudFormation template.

To deploy the VPC architecture (AWS CLI)

- Deploy the CloudFormation template network-firewall-eks.yaml, which you previously downloaded to your local file system from the Amazon S3 bucket. You can do this through the AWS CLI by using the create-stack command, as follows.

aws cloudformation create-stack --stack-name AWS-Network-Firewall-Multi-AZ \
--template-body file://network-firewall-eks.yaml \
--parameters ParameterKey=NetworkFirewallAllowedWebsites,ParameterValue=".amazonaws.com\,.docker.io\,.docker.com" \
--capabilities CAPABILITY_NAMED_IAM

Note: The initially allowed hostnames for egress filtering are passed to the network firewall by using the parameter key NetworkFirewallAllowedWebsites in the CloudFormation stack. In this example, the allowed hostnames are .amazonaws.com, .docker.io, and .docker.com.

- Make a note of the subnet IDs from the stack outputs of the CloudFormation stack after the status goes to CREATE_COMPLETE.

aws cloudformation describe-stacks \
--stack-name AWS-Network-Firewall-Multi-AZ

Note: For simplicity, the CloudFormation stack name is AWS-Network-Firewall-Multi-AZ, but you can change this name according to your needs and follow the same naming throughout this post.
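If you save the JSON output of the describe-stacks command, a short script can pull out the subnet IDs for the next procedure. The following is a minimal sketch that relies only on the standard describe-stacks response shape (Stacks, Outputs, OutputKey, OutputValue). The output key names in the sample data, such as PrivateSubnetA, are hypothetical; check the actual keys in your own stack outputs.

```python
import json


def stack_outputs(describe_stacks_json: str) -> dict:
    """Map OutputKey to OutputValue for the first stack in a describe-stacks response."""
    response = json.loads(describe_stacks_json)
    outputs = response["Stacks"][0].get("Outputs", [])
    return {item["OutputKey"]: item["OutputValue"] for item in outputs}


def subnet_outputs(outputs: dict) -> dict:
    """Keep only the outputs whose value looks like a subnet ID."""
    return {key: value for key, value in outputs.items() if value.startswith("subnet-")}


if __name__ == "__main__":
    # Hypothetical sample data; real key names come from your stack.
    sample = json.dumps({
        "Stacks": [{
            "StackName": "AWS-Network-Firewall-Multi-AZ",
            "Outputs": [
                {"OutputKey": "PrivateSubnetA", "OutputValue": "subnet-0aaa"},
                {"OutputKey": "ProtectedSubnetA", "OutputValue": "subnet-0bbb"},
                {"OutputKey": "VpcId", "OutputValue": "vpc-0ccc"},
            ],
        }]
    })
    for key, value in subnet_outputs(stack_outputs(sample)).items():
        print(key, value)
```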

To deploy the VPC architecture (console)

In your account, launch the AWS CloudFormation template by choosing the following Launch Stack button. It will take approximately 10 minutes for the CloudFormation stack to complete.

Note: The stack will launch in the N. Virginia (us-east-1) Region. To deploy this solution into other AWS Regions, download the solution’s CloudFormation template, modify it, and deploy it to the selected Region.

Deploy and set up access to the EKS cluster

In this step, you’ll use the eksctl CLI tool to create an EKS cluster.

To deploy an EKS cluster by using the eksctl tool

There are two methods for creating an EKS cluster. Method A uses the eksctl create cluster command without a configuration (config) file. Method B uses a config file.

Note: Before you start, make sure you have the VPC subnet details available from the previous procedure.

Method A: No config file

You can create an EKS cluster without a config file by using the eksctl create cluster command.

- From the CLI, enter the following commands.

eksctl create cluster \
--vpc-private-subnets=<private-subnet-A>,<private-subnet-B> \
--vpc-public-subnets=<public-subnet-A>,<public-subnet-B>

- Make sure that the subnets passed to the --vpc-public-subnets parameter are protected subnets taken from the VPC architecture CloudFormation stack output. You can verify the subnet IDs by looking at step 2 in the To deploy the VPC architecture section.
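Because the protected subnets, not the firewall's public subnets, go into the --vpc-public-subnets flag, it can help to assemble the command programmatically from the stack outputs. This is a minimal sketch; the function name and the subnet IDs are placeholders, and only the two flags shown in Method A are used.

```python
import shlex


def eksctl_create_cluster_args(private_subnets: list, protected_subnets: list) -> list:
    """Build the Method A command line from two lists of subnet IDs."""
    if not private_subnets or not protected_subnets:
        raise ValueError("both subnet lists must be non-empty")
    return [
        "eksctl", "create", "cluster",
        "--vpc-private-subnets=" + ",".join(private_subnets),
        # The protected subnets from the stack output are passed here.
        "--vpc-public-subnets=" + ",".join(protected_subnets),
    ]


if __name__ == "__main__":
    args = eksctl_create_cluster_args(
        ["subnet-private-a", "subnet-private-b"],      # placeholder IDs
        ["subnet-protected-a", "subnet-protected-b"],  # placeholder IDs
    )
    print(shlex.join(args))
```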

Method B: With config file

Another way to create an EKS cluster is by using a config file (named cluster.yaml in this example), which gives you more options.

- Create a file named cluster.yaml by adding the following contents to it.

- Run the following command to create an EKS cluster using the eksctl tool and the cluster.yaml config file.

eksctl create cluster -f cluster.yaml

To set up access to the EKS cluster

- Before you deploy a sample Kubernetes Pod, make sure you have the kubeconfig file set up for the EKS cluster that you created in step 2 of To deploy an EKS cluster by using the eksctl tool. For more information, see Create a kubeconfig for Amazon EKS. You can use eksctl to do this, as follows.

eksctl utils write-kubeconfig --cluster filter-egress-traffic-test

- To set the kubectl context to the EKS cluster you just created, first list the available contexts by using the following command.

kubectl config get-contexts

Figure 3 shows an example of the output from this command.

Figure 3: kubectl config get-contexts command output

- Copy the context name from the command output and set the context by using the following command.

kubectl config use-context <NAME-OF-CONTEXT>

To deploy a sample Pod on the EKS cluster

- Next, deploy a sample Kubernetes Pod in the EKS cluster.

kubectl run -i --tty amazon-linux --image=public.ecr.aws/amazonlinux/amazonlinux:latest sh

If you already have a Pod, you can use the following command to get a shell to a running container.

kubectl attach amazon-linux -c amazon-linux -i -t

- Now you can test access to a website that is not on the allow list in the AWS Network Firewall stateful rules, using these steps.

- First, install the cURL tool on the sample Pod you created previously. cURL is a command-line tool for getting or sending data, including files, using URL syntax. Because cURL uses the libcurl library, it supports every protocol libcurl supports. On the Pod where you have obtained a shell to a running container, run the following command to install cURL.

yum install -y curl

- Access a website using cURL.

curl -I https://thinkwithwp.com

This gives a timeout error similar to the following.

curl -I https://thinkwithwp.com
curl: (28) Operation timed out after 300476 milliseconds with 0 out of 0 bytes received

- Navigate to the Amazon CloudWatch console and check the alert logs for Network Firewall. You will see a log entry like the following sample, indicating that the access to https://thinkwithwp.com was blocked.

The error shown here occurred because the hostname thinkwithwp.com was not added to the Network Firewall stateful rules allow list. When you deployed the network firewall in step 1 of the To deploy the VPC architecture procedure, the values provided for the CloudFormation parameter NetworkFirewallAllowedWebsites were just .amazonaws.com, .docker.io, and .docker.com, and not thinkwithwp.com.
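The allow-list decision described above can be approximated with a few lines of Python. This is a sketch of the matching idea, not the firewall's implementation. It assumes that an entry with a leading dot, such as .amazonaws.com, matches the domain itself and all of its subdomains, and that an entry without a leading dot matches only that exact hostname; confirm the exact semantics in the AWS Network Firewall Developer Guide. The function names are illustrative.

```python
ALLOWED = [".amazonaws.com", ".docker.io", ".docker.com"]


def sni_matches(hostname: str, entry: str) -> bool:
    """Return True if hostname matches one allow-list entry."""
    hostname = hostname.lower().rstrip(".")
    entry = entry.lower()
    if entry.startswith("."):
        # Assumed wildcard semantics: the domain itself or any subdomain.
        return hostname == entry[1:] or hostname.endswith(entry)
    return hostname == entry


def is_allowed(hostname: str, allow_list: list) -> bool:
    """Return True if any allow-list entry matches the SNI hostname."""
    return any(sni_matches(hostname, entry) for entry in allow_list)


if __name__ == "__main__":
    for name in ["s3.amazonaws.com", "thinkwithwp.com"]:
        print(name, "allowed" if is_allowed(name, ALLOWED) else "blocked")
```

With the initial parameter values, thinkwithwp.com matches no entry, which is consistent with the timeout seen from the sample Pod.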


Update the Network Firewall stateful rules

In this procedure, you’ll update the Network Firewall stateful rules to allow the thinkwithwp.com domain name.

To update the Network Firewall stateful rules (console)

- In the AWS CloudFormation console, locate the stack you used to create the network firewall earlier in the To deploy the VPC architecture procedure.

- Select the stack you want to update, and choose Update. In the Parameters section, update the stack by adding the hostname thinkwithwp.com to the parameter NetworkFirewallAllowedWebsites as a comma-separated value. See Updating stacks directly in the AWS CloudFormation User Guide for more information on stack updates.
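If you maintain the allow list in a script, the comma-separated parameter value can be built as in the following minimal sketch. The helper names are illustrative. The second helper reproduces the backslash-escaped commas that the create-stack command earlier in this post uses for the AWS CLI shorthand syntax.

```python
def add_allowed_hostname(current_value: str, hostname: str) -> str:
    """Append hostname to a comma-separated allow list, skipping duplicates."""
    entries = [entry.strip() for entry in current_value.split(",") if entry.strip()]
    if hostname not in entries:
        entries.append(hostname)
    return ",".join(entries)


def escape_for_cli_shorthand(value: str) -> str:
    """Escape commas the way the create-stack example does (\\,)."""
    return value.replace(",", "\\,")


if __name__ == "__main__":
    updated = add_allowed_hostname(".amazonaws.com,.docker.io,.docker.com", "thinkwithwp.com")
    print(updated)
    print(escape_for_cli_shorthand(updated))
```

In the console you enter the plain comma-separated value; the escaped form is only relevant when the value is passed on the command line.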

Re-test from the sample pod

In this step, you’ll test the outbound access once again from the sample Pod you created earlier in the To deploy a sample Pod on the EKS cluster procedure.

To test the outbound access to the thinkwithwp.com hostname

- Get a shell to a running container in the sample Pod that you deployed earlier, by using the following command.

kubectl attach amazon-linux -c amazon-linux -i -t

- On the terminal where you got a shell to a running container in the sample Pod, run the following cURL command.

curl -I https://thinkwithwp.com

- The response should be a success HTTP 200 OK message similar to this one.

curl -Ik https://thinkwithwp.com
HTTP/2 200
content-type: text/html;charset=UTF-8
server: Server

If the VPC subnets are organized according to the architecture suggested in this solution, outbound traffic from the EKS cluster can be sent to the network firewall and then filtered based on hostnames provided by SNI.

Collecting hostnames provided by the SNI

In this step, you’ll see how to configure the network firewall to collect all the hostnames provided by SNI that are accessed by an already running application—without blocking any access—by making use of CloudWatch and alert logs.

To configure the network firewall (console)

- In the AWS CloudFormation console, locate the stack that created the network firewall earlier in the To deploy the VPC architecture procedure.

- Select the stack to update, and then choose Update.

- Choose Replace current template and upload the template network-firewall-eks-collect-all.yaml. (This template should be available from the files that you downloaded earlier from the S3 bucket in the Prerequisites section.) Choose Next. See Updating stacks directly for more information.

To configure the network firewall (AWS CLI)

- Update the CloudFormation stack by using the network-firewall-eks-collect-all.yaml template file that you previously downloaded from the S3 bucket in the Prerequisites section, using the update-stack command as follows.

aws cloudformation update-stack --stack-name AWS-Network-Firewall-Multi-AZ \
--template-body file://network-firewall-eks-collect-all.yaml \
--capabilities CAPABILITY_NAMED_IAM

To check the rules in the AWS Management Console

- In the AWS Management Console, navigate to the Amazon VPC console and locate the AWS Network Firewall tab.

- Select the network firewall that you created earlier, and then select the stateful rule with the name log-all-tls.

- The rule group should appear as shown in Figure 4, indicating that the logs are captured and sent to the Alert logs.

Figure 4: Network Firewall rule groups

To test based on stateful rule

- On the terminal, get the shell for the running container in the Pod you created earlier. If this Pod is not available, follow the instructions in the To deploy a sample Pod on the EKS cluster procedure to create a new sample Pod.

- Run the cURL command to thinkwithwp.com. It should return HTTP 200 OK, as follows.

curl -Ik https://thinkwithwp.com/
HTTP/2 200
content-type: text/html;charset=UTF-8
server: Server
date:
------
----------
--------------

- Navigate to the Amazon CloudWatch Logs console and look up the Alert logs log group with the name /AWS-Network-Firewall-Multi-AZ/anfw/alert. You can see the hostnames provided by SNI within the TLS protocol passing through the network firewall. The CloudWatch Alert logs for allowed hostnames in the SNI look like the following example.

Optionally, you can also create an AWS Lambda function to collect the hostnames that are passed through the network firewall.

To create a Lambda function to collect hostnames provided by SNI (optional)

- Create subscriptions for one or more log streams to invoke a function when logs are created or match an optional pattern.

For more information, see Using Lambda with CloudWatch Logs. Figure 5 is an example architecture in which a Lambda function extracts the hostnames provided by SNI and sends them to an Amazon Simple Notification Service (Amazon SNS) topic, which alerts the topic's subscribers.

Figure 5: Architecture to collect and capture hostnames by using Network Firewall

Sample Lambda code

The sample Lambda code from Figure 5 is written in Python 3 and is provided in the lambda_function.py file that you downloaded in the Prerequisites section. The sample collects the hostnames that are provided by SNI and captured in Network Firewall. Network Firewall logs the hostnames provided by SNI in the CloudWatch Alert logs. Then, by creating a CloudWatch Logs subscription filter, you can send logs to the Lambda function for further processing, for example to invoke SNS notifications.
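For orientation, here is an independent minimal sketch of such a function; it is not the post's lambda_function.py. It relies on the documented CloudWatch Logs subscription payload (base64-encoded, gzip-compressed JSON under awslogs.data) and assumes that each alert record is a JSON document carrying the hostname at event.tls.sni. The environment variable name SNS_TOPIC_ARN and the function name `extract_sni_hostnames` are hypothetical.

```python
import base64
import gzip
import json
import os


def extract_sni_hostnames(event: dict) -> list:
    """Decode a CloudWatch Logs subscription event and return unique SNI hostnames."""
    compressed = base64.b64decode(event["awslogs"]["data"])
    payload = json.loads(gzip.decompress(compressed))
    hostnames = []
    for log_event in payload.get("logEvents", []):
        try:
            record = json.loads(log_event["message"])
        except (KeyError, ValueError):
            continue  # skip entries that are not JSON alert records
        if not isinstance(record, dict):
            continue
        # Assumed alert log layout: {"event": {"tls": {"sni": "<hostname>"}}}
        sni = record.get("event", {}).get("tls", {}).get("sni")
        if sni and sni not in hostnames:
            hostnames.append(sni)
    return hostnames


def lambda_handler(event, context):
    hostnames = extract_sni_hostnames(event)
    topic_arn = os.environ.get("SNS_TOPIC_ARN")  # hypothetical variable name
    if hostnames and topic_arn:
        import boto3  # provided by the Lambda Python runtime

        boto3.client("sns").publish(
            TopicArn=topic_arn,
            Subject="Hostnames seen by Network Firewall",
            Message="\n".join(hostnames),
        )
    return {"hostnames": hostnames}
```

Keeping the decoding logic in a separate function makes it possible to test it locally with a hand-built payload, without AWS credentials or an SNS topic.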

Clean up

In this step, you’ll clean up the infrastructure that was created as part of this solution.

To delete the Kubernetes workloads

- On the terminal, using the kubectl CLI tool, run the following command to delete the sample Pod that you created earlier.

kubectl delete pods amazon-linux

Note: Clean up all the Kubernetes workloads running on the EKS cluster. For example, if a Kubernetes service of type LoadBalancer is deployed, and the EKS cluster where it exists is deleted, the load balancer will not be deleted. The best practice is to clean up all the deployed workloads.

- On the terminal, using the eksctl CLI tool, delete the created EKS cluster by using the following command.

eksctl delete cluster --name filter-egress-traffic-test

To delete the CloudFormation stack and AWS Network Firewall

- Navigate to the AWS CloudFormation console and choose the stack with the name AWS-Network-Firewall-Multi-AZ.

- Choose Delete, and then at the prompt choose Delete Stack. For more information, see Deleting a stack on the AWS CloudFormation console.

Conclusion

By following the VPC architecture explained in this blog post, you can protect the applications running on an Amazon EKS cluster by filtering the outbound traffic based on the approved hostnames that are provided by SNI in the Network Firewall Allow list.

Additionally, with a simple Lambda function, CloudWatch Logs, and an SNS topic, you can get readable hostnames provided by the SNI. Using these hostnames, you can learn about the traffic pattern for the applications that are running within the EKS cluster, and later create a strict list to allow only the required outbound traffic. To learn more about Network Firewall stateful rules, see Working with stateful rule groups in AWS Network Firewall in the AWS Network Firewall Developer Guide.

If you have feedback about this post, submit comments in the Comments section below. If you have questions about this post, contact AWS Support.
