AWS Machine Learning Blog

Derive generative AI-powered insights from ServiceNow with Amazon Q Business

This post shows how to configure the Amazon Q ServiceNow connector to index your ServiceNow platform and take advantage of generative AI searches in Amazon Q. We use an illustrative ServiceNow platform as an example to discuss technical topics related to AWS services.

Intelligent healthcare forms analysis with Amazon Bedrock

In this post, we explore using Anthropic Claude 3, a large language model (LLM) available on Amazon Bedrock. Amazon Bedrock provides access to several LLMs, such as Anthropic Claude 3, which can be used to generate semi-structured data relevant to the healthcare industry. This can be particularly useful for creating various healthcare-related forms, such as patient intake forms, insurance claim forms, or medical history questionnaires.

Harness the power of AI and ML using Splunk and Amazon SageMaker Canvas

For organizations looking beyond the use of out-of-the-box Splunk AI/ML features, this post explores how Amazon SageMaker Canvas, a no-code ML development service, can be used in conjunction with data collected in Splunk to drive actionable insights. We also demonstrate how to use the generative AI capabilities of SageMaker Canvas to speed up your data exploration and help you build better ML models.

How Deltek uses Amazon Bedrock for question and answering on government solicitation documents

This post provides an overview of a custom solution developed by the AWS Generative AI Innovation Center (GenAIIC) for Deltek, a globally recognized standard for project-based businesses in both government contracting and professional services. Deltek serves over 30,000 clients with industry-specific software and information solutions. In this collaboration, the AWS GenAIIC team created a RAG-based solution for Deltek to enable Q&A on single and multiple government solicitation documents. The solution uses AWS services including Amazon Textract, Amazon OpenSearch Service, and Amazon Bedrock.

Cisco achieves 50% latency improvement using Amazon SageMaker Inference faster autoscaling feature

Webex by Cisco is a leading provider of cloud-based collaboration solutions, which include video meetings, calling, messaging, events, polling, asynchronous video, and customer experience solutions such as contact center and purpose-built collaboration devices. Webex’s focus on delivering inclusive collaboration experiences fuels its innovation, which uses AI and machine learning to remove the barriers of geography, language, personality, and familiarity with technology. Its solutions are underpinned with security and privacy by design. Webex works with the world’s leading business and productivity apps, including AWS. This blog post highlights how Cisco implemented the faster autoscaling feature of Amazon SageMaker Inference.

How Cisco accelerated the use of generative AI with Amazon SageMaker Inference

This post highlights how Cisco implemented new functionalities and migrated existing workloads to Amazon SageMaker inference components for their industry-specific contact center use cases. By integrating generative AI, they can now analyze call transcripts to better understand customer pain points and improve agent productivity. Cisco has also implemented conversational AI experiences, including chatbots and virtual agents that can generate human-like responses, to automate personalized communications based on customer context. Additionally, they are using generative AI to extract key call drivers, optimize agent workflows, and gain deeper insights into customer sentiment. Cisco’s adoption of SageMaker Inference has enabled them to streamline their contact center operations and provide more satisfying, personalized interactions that address customer needs.

Discover insights from Box with the Amazon Q Box connector

Seamless access to content and insights is crucial for delivering exceptional customer experiences and driving successful business outcomes. Box, a leading cloud content management platform, serves as a central repository for diverse digital assets and documents in many organizations. An enterprise Box account typically contains a wealth of materials, including documents, presentations, knowledge articles, and […]

How Twilio generated SQL using Looker Modeling Language data with Amazon Bedrock

As one of the largest AWS customers, Twilio engages with data, artificial intelligence (AI), and machine learning (ML) services to run their daily workloads. This post highlights how Twilio enabled natural language-driven data exploration of business intelligence (BI) data with RAG and Amazon Bedrock.

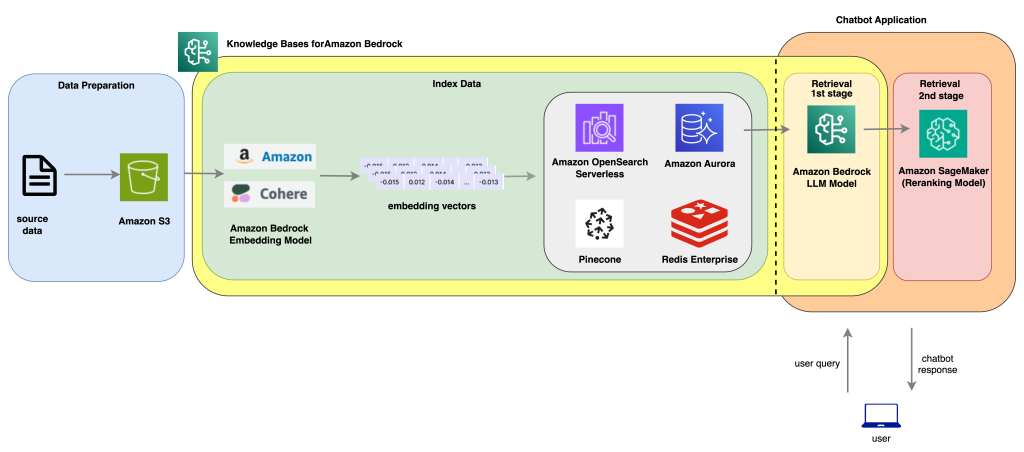

Improve AI assistant response accuracy using Knowledge Bases for Amazon Bedrock and a reranking model

AI chatbots and virtual assistants have become increasingly popular in recent years thanks to breakthroughs in large language models (LLMs). Trained on large volumes of data, these models incorporate memory components in their architectural design, allowing them to understand textual context. Most common use cases for chatbot assistants focus on a few […]

Automate the machine learning model approval process with Amazon SageMaker Model Registry and Amazon SageMaker Pipelines

This post illustrates how to use common architecture principles to transition from a manual monitoring process to one that is automated. You can use these principles and existing AWS services such as Amazon SageMaker Model Registry and Amazon SageMaker Pipelines to deliver innovative solutions to your customers while maintaining compliance for your ML workloads.