Artificial Intelligence

Category: Intermediate (200)

Migrate from Anthropic’s Claude Sonnet 3.x to Claude Sonnet 4.x on Amazon Bedrock

This post provides a systematic approach to migrating from Anthropic’s Claude 3.5 Sonnet to Claude Sonnet 4.5 on Amazon Bedrock. We examine the key model differences, highlight essential migration considerations, and deliver proven best practices to transform this necessary transition into a strategic advantage that drives measurable value for your organization.

Build trustworthy AI agents with Amazon Bedrock AgentCore Observability

In this post, we walk you through implementation options for both agents hosted on Amazon Bedrock AgentCore Runtime and agents hosted on other services like Amazon Elastic Compute Cloud (Amazon EC2), Amazon Elastic Kubernetes Service (Amazon EKS), AWS Lambda, or alternative cloud providers. We also share best practices for incorporating observability throughout the development lifecycle.

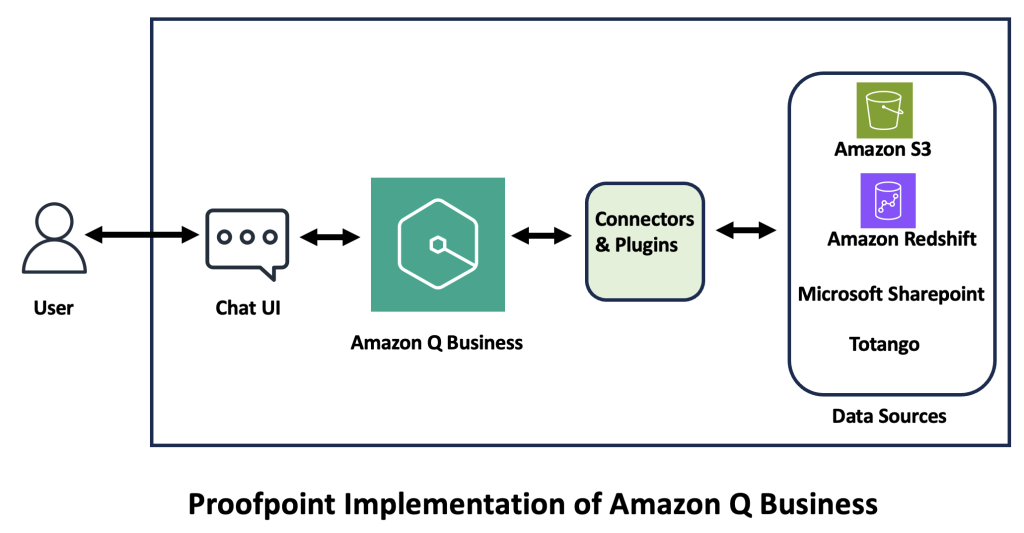

Unlocking the future of professional services: How Proofpoint uses Amazon Q Business

Proofpoint has redefined its professional services by integrating Amazon Q Business, a fully managed, generative AI-powered assistant that you can configure to answer questions, provide summaries, generate content, and complete tasks based on your enterprise data. In this post, we explore how Amazon Q Business transformed Proofpoint’s professional services, detailing its deployment, functionality, and future roadmap.

Deploy Amazon Bedrock Knowledge Bases using Terraform for RAG-based generative AI applications

In this post, we demonstrate how to automate the deployment of Amazon Bedrock Knowledge Bases for RAG applications using Terraform.

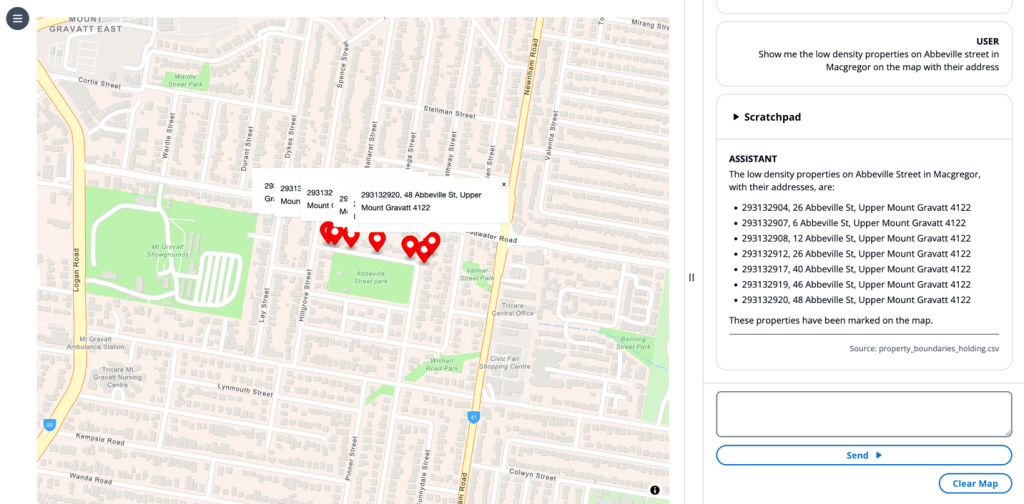

Enhance Geospatial Analysis and GIS Workflows with Amazon Bedrock Capabilities

Applying emerging technologies to the geospatial domain offers a unique opportunity to create transformative user experiences and intuitive workstreams for users and organizations to deliver on their missions and responsibilities. In this post, we explore how you can integrate existing systems with Amazon Bedrock to create new workflows that unlock efficiencies and insights. This integration can benefit technical, nontechnical, and leadership roles alike.

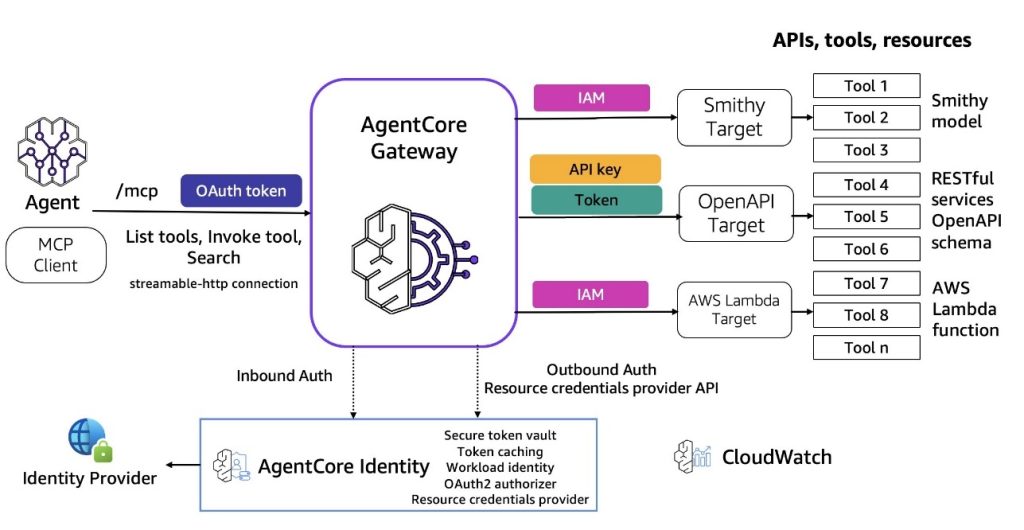

Introducing Amazon Bedrock AgentCore Gateway: Transforming enterprise AI agent tool development

In this post, we discuss Amazon Bedrock AgentCore Gateway, a fully managed service that changes how enterprises connect AI agents with tools and services by providing a centralized tool server with a unified interface for agent-tool communication. The service offers key capabilities including Security Guard, Translation, Composition, Target extensibility, Infrastructure Manager, and Semantic Tool Selection, and implements a dual-sided security architecture for both inbound and outbound connections.

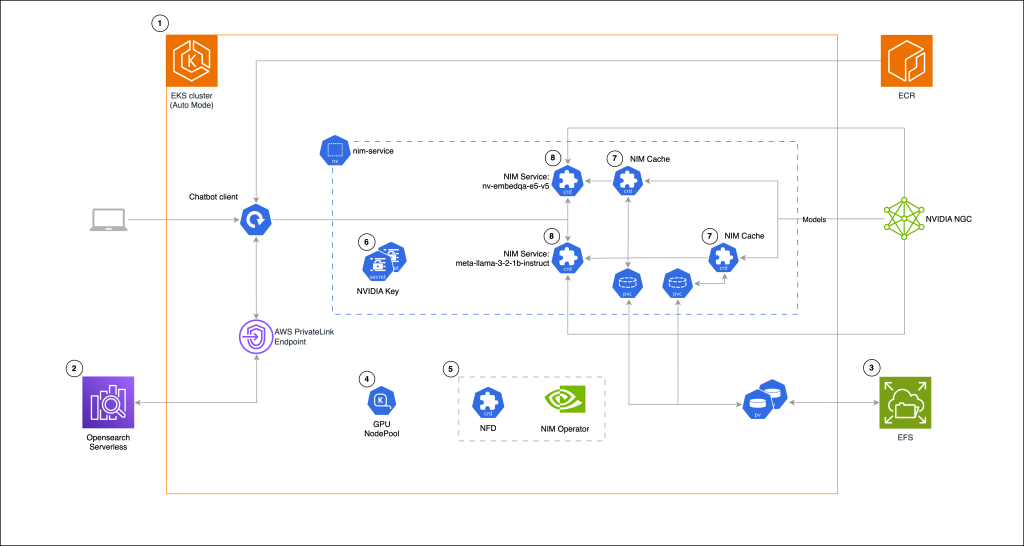

Building a RAG chat-based assistant on Amazon EKS Auto Mode and NVIDIA NIMs

In this post, we demonstrate the implementation of a practical RAG chat-based assistant using a comprehensive stack of modern technologies. The solution uses NVIDIA NIMs for both LLM inference and text embedding services, with the NIM Operator handling their deployment and management. The architecture incorporates Amazon OpenSearch Serverless to store and query high-dimensional vector embeddings for similarity search.

Citations with Amazon Nova understanding models

In this post, we demonstrate how to prompt Amazon Nova understanding models to cite sources in responses. We also walk through how to evaluate the responses and their citations for accuracy.

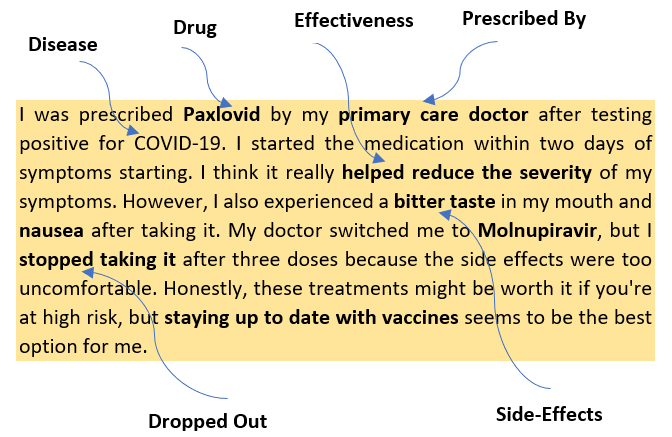

How Indegene’s AI-powered social intelligence for life sciences turns social media conversations into insights

This post explores how Indegene’s Social Intelligence Solution uses advanced AI to help life sciences companies extract valuable insights from digital healthcare conversations. Built on AWS technology, the solution addresses the growing preference of healthcare professionals (HCPs) for digital channels while overcoming the challenges of analyzing complex medical discussions at scale.

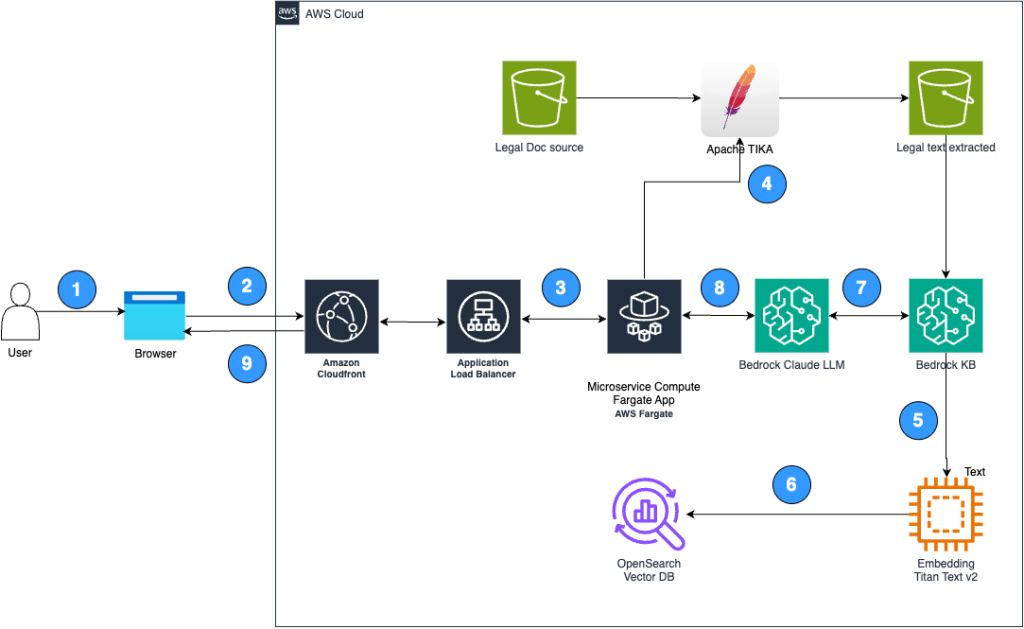

Unlocking enhanced legal document review with Lexbe and Amazon Bedrock

In this post, Lexbe, a legal document review software company, demonstrates how they integrated Amazon Bedrock and other AWS services to transform their document review process, enabling legal professionals to instantly query and extract insights from vast volumes of case documents using generative AI. Through collaboration with AWS, Lexbe achieved significant improvements in recall rates, reaching up to 90% by December 2024, and developed capabilities for broad human-style reporting and deep automated inference across multiple languages.