AWS Machine Learning Blog

Category: Generative AI

Align Meta Llama 3 to human preferences with DPO, Amazon SageMaker Studio, and Amazon SageMaker Ground Truth

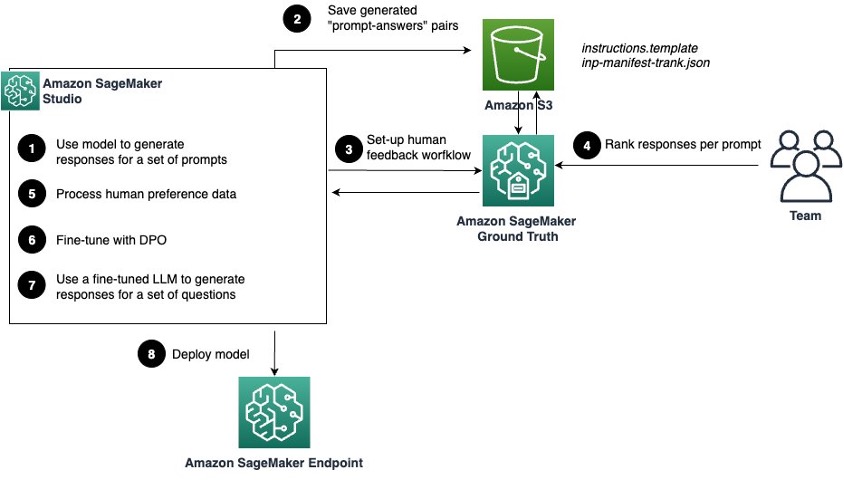

In this post, we show you how to enhance the performance of Meta Llama 3 8B Instruct by fine-tuning it using direct preference optimization (DPO) on data collected with SageMaker Ground Truth.

Exploring data using AI chat at Domo with Amazon Bedrock

In this post, we share how Domo, a cloud-centered data experiences innovator, is using Amazon Bedrock to provide a flexible and powerful AI solution.

How Vidmob is using generative AI to transform its creative data landscape

In this post, we illustrate how Vidmob, a creative data company, worked with the AWS Generative AI Innovation Center (GenAIIC) team to uncover meaningful insights at scale within creative data using Amazon Bedrock.

Ground truth curation and metric interpretation best practices for evaluating generative AI question answering using FMEval

In this post, we discuss best practices for working with the Foundation Model Evaluations Library (FMEval) in ground truth curation and metric interpretation for evaluating question answering applications for factual knowledge and quality.

Build powerful RAG pipelines with LlamaIndex and Amazon Bedrock

In this post, we show you how to use LlamaIndex with Amazon Bedrock to build robust and sophisticated RAG pipelines that unlock the full potential of LLMs for knowledge-intensive tasks.

Evaluating prompts at scale with Prompt Management and Prompt Flows for Amazon Bedrock

In this post, we demonstrate how to implement an automated prompt evaluation system using Amazon Bedrock so you can streamline your prompt development process and improve the overall quality of your AI-generated content.

Effectively manage foundation models for generative AI applications with Amazon SageMaker Model Registry

In this post, we explore the new features of Model Registry that streamline foundation model (FM) management: you can now register unzipped model artifacts and pass an End User License Agreement (EULA) acceptance flag without needing users to intervene.

Build an ecommerce product recommendation chatbot with Amazon Bedrock Agents

In this post, we show you how to build an ecommerce product recommendation chatbot using Amazon Bedrock Agents and foundation models (FMs) available in Amazon Bedrock.

How Thomson Reuters Labs achieved AI/ML innovation at pace with AWS MLOps services

In this post, we show you how Thomson Reuters Labs (TR Labs) developed an efficient, flexible, and powerful MLOps process by adopting a standardized MLOps framework that uses Amazon SageMaker, SageMaker Experiments, SageMaker Model Registry, and SageMaker Pipelines. The goal is to accelerate how quickly teams can experiment and innovate using AI and machine learning (ML), whether with natural language processing (NLP), generative AI, or other techniques. We discuss how this has helped decrease the time to market for fresh ideas and build a cost-efficient machine learning lifecycle.

Build a generative AI image description application with Anthropic’s Claude 3.5 Sonnet on Amazon Bedrock and AWS CDK

In this post, we delve into the process of building and deploying a sample application capable of generating multilingual descriptions for multiple images with a Streamlit UI, AWS Lambda powered with the Amazon Bedrock SDK, and AWS AppSync driven by the open source Generative AI CDK Constructs.