Artificial Intelligence

Category: Intermediate (200)

Demystifying Amazon Bedrock Pricing for a Chatbot Assistant

In this post, we’ll look at Amazon Bedrock pricing through the lens of a practical, real-world example: building a customer service chatbot. We’ll break down the essential cost components, walk through capacity planning for a mid-sized call center implementation, and provide detailed pricing calculations across different foundation models.

Automate enterprise workflows by integrating Salesforce Agentforce with Amazon Bedrock Agents

This post explores a practical collaboration, integrating Salesforce Agentforce with Amazon Bedrock Agents and Amazon Redshift, to automate enterprise workflows.

Responsible AI for the payments industry – Part 1

This post explores the unique challenges facing the payments industry in scaling AI adoption, the regulatory considerations that shape implementation decisions, and practical approaches to applying responsible AI principles. In Part 2, we provide practical implementation strategies to operationalize responsible AI within your payment systems.

Responsible AI for the payments industry – Part 2

In Part 1 of our series, we explored the foundational concepts of responsible AI in the payments industry. In this post, we discuss the practical implementation of responsible AI frameworks.

Discover insights from Microsoft Exchange with the Microsoft Exchange connector for Amazon Q Business

Amazon Q Business is a fully managed, generative AI-powered assistant that helps enterprises unlock the value of their data and knowledge. With Amazon Q Business, you can quickly find answers to questions, generate summaries and content, and complete tasks by using the information and expertise stored across your company’s various data sources and enterprise systems. […]

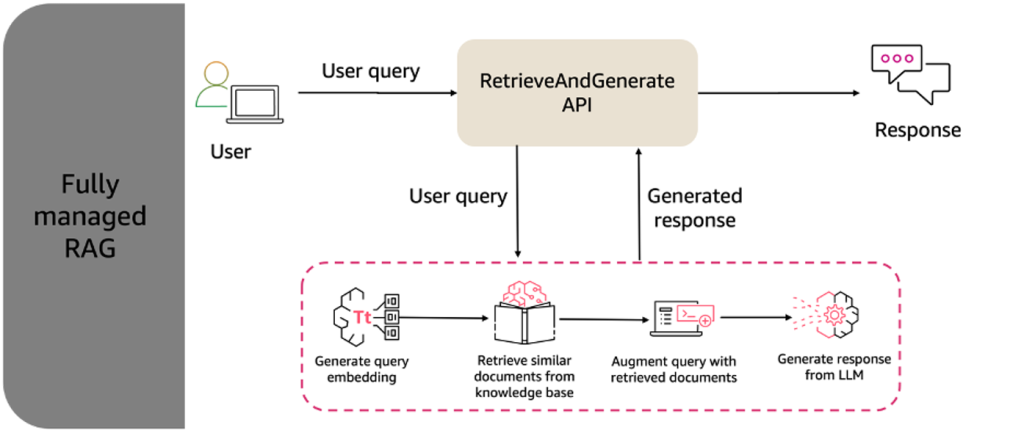

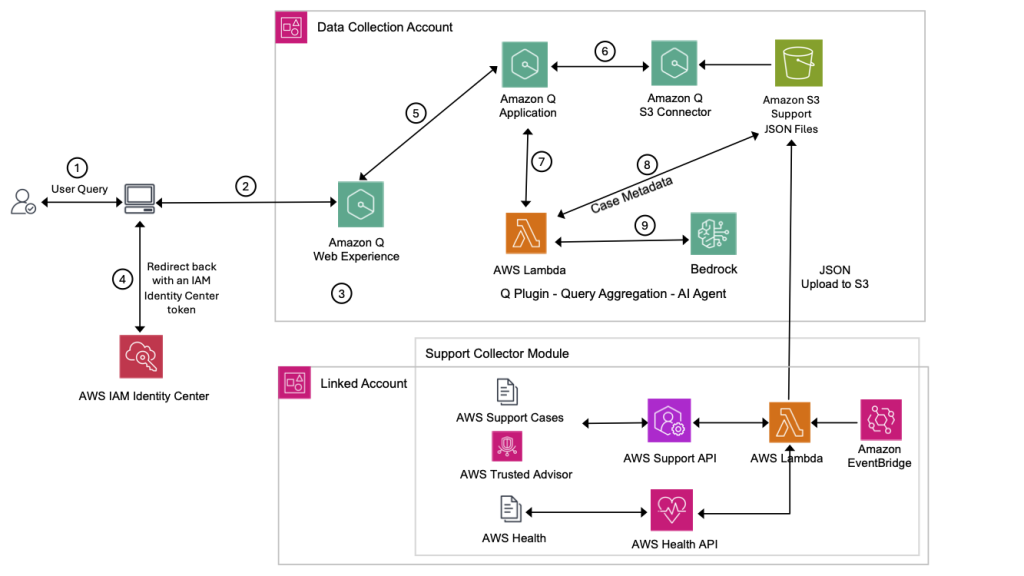

AI agents unifying structured and unstructured data: Transforming support analytics and beyond with Amazon Q Plugins

Learn how to enhance Amazon Q with custom plugins to combine semantic search capabilities with precise analytics for AWS Support data. This solution enables more accurate answers to analytical questions by integrating structured data querying with RAG architecture, allowing teams to transform raw support cases and health events into actionable insights. Discover how this enhanced architecture delivers exact numerical analysis while maintaining natural language interactions for improved operational decision-making.

Fine-tune and deploy Meta Llama 3.2 Vision for generative AI-powered web automation using AWS DLCs, Amazon EKS, and Amazon Bedrock

In this post, we present a complete solution for fine-tuning and deploying the Llama-3.2-11B-Vision-Instruct model for web automation tasks. We demonstrate how to build a secure, scalable, and efficient infrastructure using AWS Deep Learning Containers (DLCs) on Amazon Elastic Kubernetes Service (Amazon EKS).

Customize Amazon Nova in Amazon SageMaker AI using Direct Preference Optimization

At the AWS Summit in New York City, we introduced a comprehensive suite of model customization capabilities for Amazon Nova foundation models. These capabilities are available as ready-to-use recipes on Amazon SageMaker AI, and you can use them to adapt Nova Micro, Nova Lite, and Nova Pro across the model training lifecycle, including pre-training, supervised fine-tuning, and alignment. In this post, we present a streamlined approach to customizing Nova Micro in SageMaker training jobs.

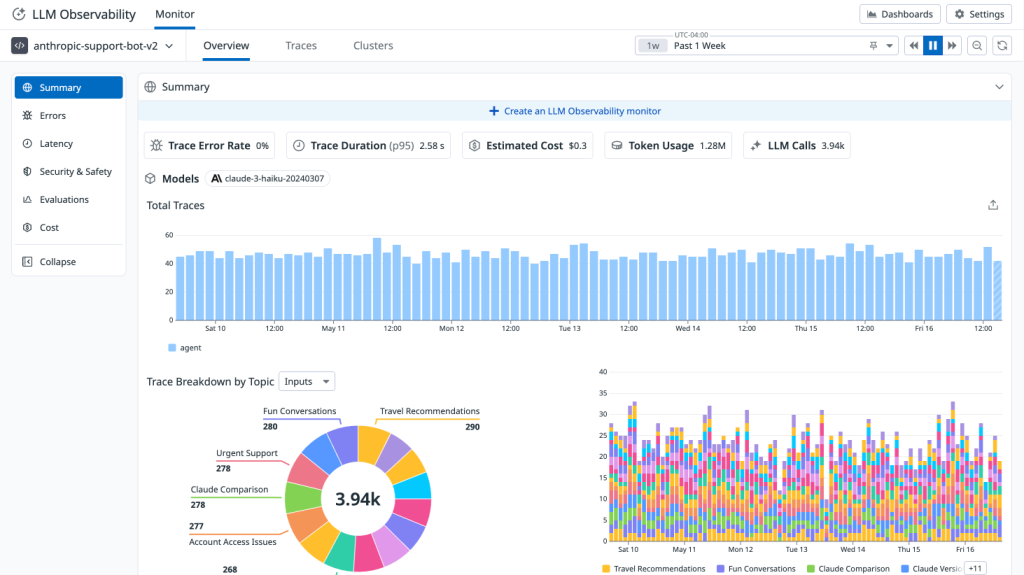

Monitor agents built on Amazon Bedrock with Datadog LLM Observability

We’re excited to announce a new integration between Datadog LLM Observability and Amazon Bedrock Agents that helps monitor agentic applications built on Amazon Bedrock. In this post, we’ll explore how Datadog’s LLM Observability provides the visibility and control needed to successfully monitor, operate, and debug production-grade agentic applications built on Amazon Bedrock Agents.

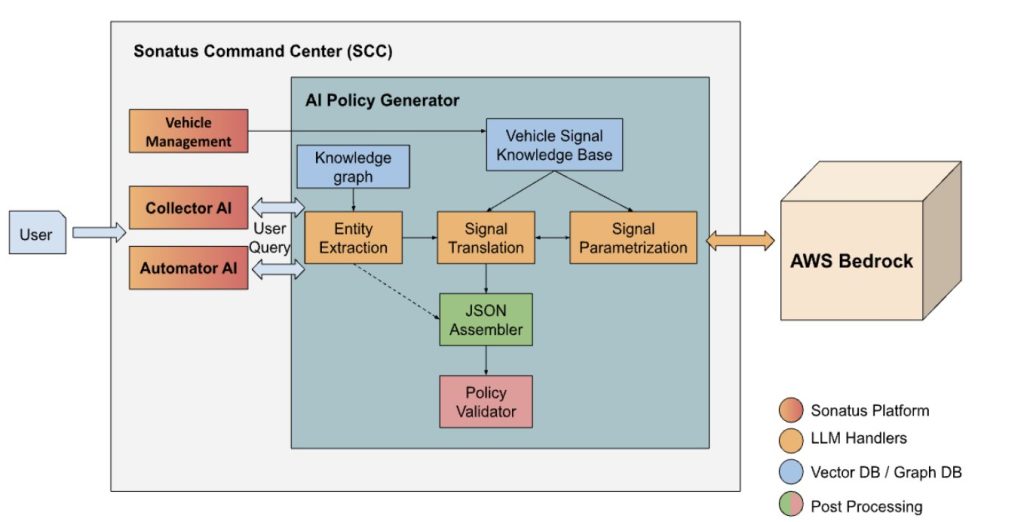

Build AI-driven policy creation for vehicle data collection and automation using Amazon Bedrock

Sonatus partnered with the AWS Generative AI Innovation Center to develop a natural language interface that generates data collection and automation policies using generative AI. This innovation aims to shorten the policy generation process from days to minutes while making it accessible to engineers and non-experts alike. In this post, we explore how we built this system using Sonatus’s Collector AI and Amazon Bedrock. We discuss the background, challenges, and high-level solution architecture.